OpenAI Patches ChatGPT Data Exfiltration Flaw and Codex GitHub Token Vulnerability

TL;DR

OpenAI has addressed two significant security vulnerabilities: a "covert transport mechanism" in ChatGPT that allowed sensitive data exfiltration via DNS requests, and a command injection flaw in Codex that could lead to the theft of GitHub User Access Tokens. Both issues have been patched as of February 2026, with no evidence of malicious exploitation.

Introduction

As AI models evolve into full-scale computing environments, their security architecture is coming under closer scrutiny. Recent findings from cybersecurity firms Check Point and BeyondTrust revealed critical vulnerabilities within OpenAI’s ecosystem. These flaws could have allowed the silent exfiltration of user data from ChatGPT and the theft of GitHub credentials through Codex, OpenAI’s software engineering agent.

The ChatGPT Side-Channel: Exfiltration via DNS

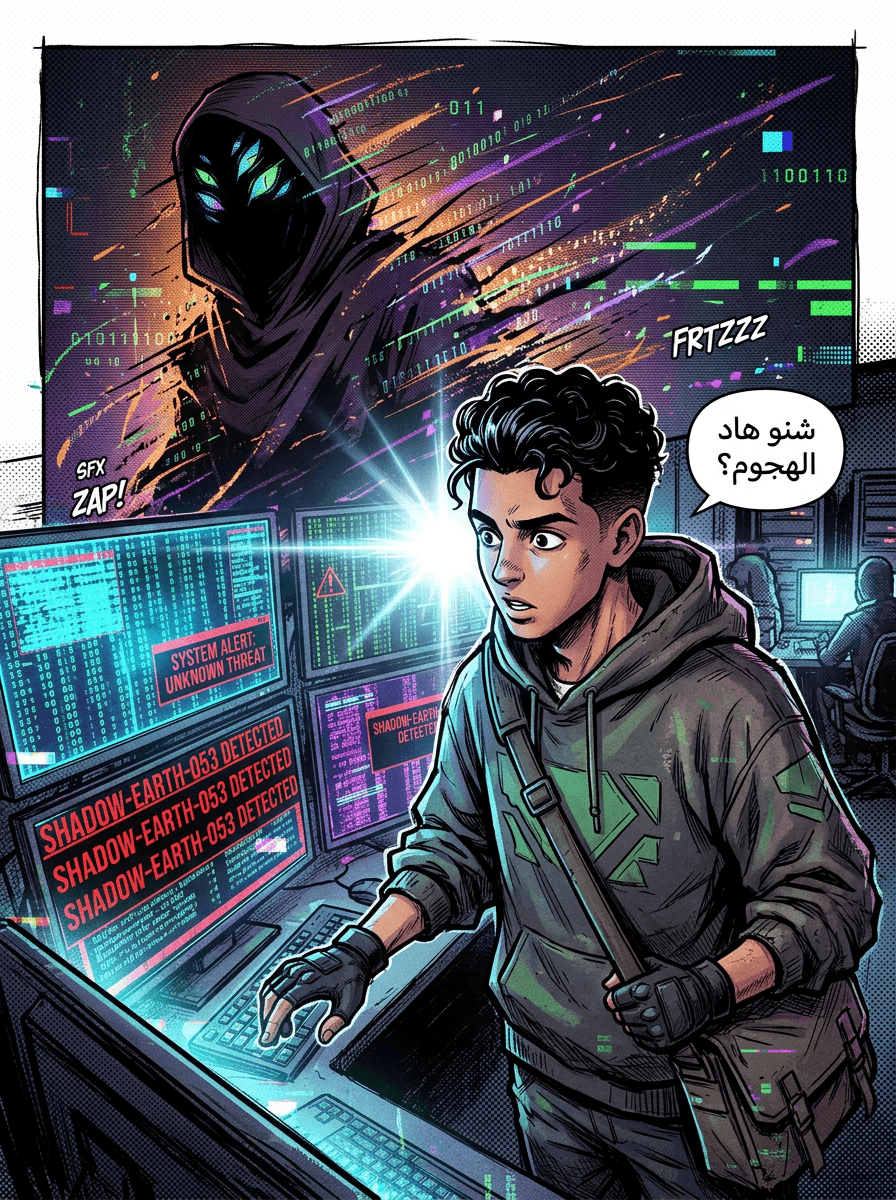

According to research published by Check Point, a previously unknown vulnerability in ChatGPT could let attackers bypass the platform's security guardrails and steal sensitive conversation data.

How the Attack Worked

ChatGPT is designed to block unauthorized outbound network requests, but researchers discovered a side channel in the Linux runtime that ChatGPT uses for code execution and data analysis.

The exploit functioned by:

- Malicious Prompts: An attacker could trick a user into entering a malicious prompt or embed the logic within a "backdoored" custom GPT.

- DNS Tunneling: The vulnerability abused a hidden DNS-based communication path. By encoding sensitive information (such as uploaded files or messages) into DNS requests, an attacker could use the runtime's name lookups as a "covert transport mechanism."

- Bypassing Guardrails: Because the runtime was assumed to be isolated, these DNS requests were not recognized as an external data transfer. As a result, no warnings were triggered and no user consent was requested.
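To make the "covert transport" idea concrete, here is a minimal Python sketch of general DNS tunneling. It assumes base32 encoding, a leading sequence-number label, and a hypothetical attacker-controlled domain `attacker.example` (the `.example` TLD is reserved for documentation). Check Point's report does not publish its payload, so this illustrates the technique in general rather than the researchers' exact method. The sketch only builds and parses query names within the RFC 1035 length limits and sends no traffic.

```python
import base64

# RFC 1035 limits: a label holds at most 63 octets and a full name at most
# 255 octets on the wire, which is 253 characters in dotted text form.
MAX_LABEL_LEN = 63
MAX_NAME_LEN = 253


def encode_to_dns_names(data: bytes, domain: str, labels_per_name: int = 3) -> list[str]:
    """Pack bytes into DNS query names under a (hypothetical) domain."""
    # Base32 uses a single-case alphabet (A-Z, 2-7), so it survives DNS's
    # case-insensitive handling; '=' padding is stripped because it is not
    # a valid hostname character.
    encoded = base64.b32encode(data).decode("ascii").rstrip("=").lower()
    labels = [encoded[i:i + MAX_LABEL_LEN] for i in range(0, len(encoded), MAX_LABEL_LEN)]
    names = []
    for seq, start in enumerate(range(0, len(labels), labels_per_name)):
        # A leading sequence-number label lets the receiver reorder chunks.
        name = ".".join([str(seq), *labels[start:start + labels_per_name], domain])
        if len(name) > MAX_NAME_LEN:
            raise ValueError("labels_per_name is too large for the DNS name limit")
        names.append(name)
    return names


def decode_from_dns_names(names: list[str], domain: str) -> bytes:
    """Reassemble the original bytes, as a name server for `domain` could."""
    suffix = "." + domain
    ordered = sorted(names, key=lambda n: int(n.split(".", 1)[0]))
    # Drop the domain suffix and the sequence label, then concatenate the rest.
    encoded = "".join("".join(n[: -len(suffix)].split(".")[1:]) for n in ordered)
    encoded += "=" * (-len(encoded) % 8)  # restore stripped base32 padding
    return base64.b32decode(encoded, casefold=True)
```

Any resolver that forwards these lookups toward the authoritative name server for the attacker's domain delivers the data. That is why a sandbox that blocks ordinary outbound connections but still resolves arbitrary external names is not truly isolated.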

Beyond data theft, researchers noted that this same communication path could be used to establish a remote shell inside the Linux runtime, allowing for arbitrary command execution.

Codex Command Injection and GitHub Token Theft

In a separate discovery by BeyondTrust Phantom Labs, a critical command injection vulnerability was identified in OpenAI's Codex. This flaw affected the ChatGPT website, Codex CLI, Codex SDK, and the Codex IDE Extension.

The Mechanism of Compromise

The vulnerability was rooted in improper input sanitization of GitHub branch names.

- The Exploit: An attacker could smuggle arbitrary commands through the branch name parameter in an HTTPS POST request to the backend Codex API.

- The Result: When Codex executed a task associated with that branch, the malicious payload would run inside the agent's container. This allowed researchers to retrieve the victim’s GitHub User Access Token, the same credentials Codex uses to authenticate.

- Lateral Movement: With these tokens, an attacker could achieve read/write access to a victim’s entire codebase, representing a scalable attack path into enterprise systems.
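The Codex backend code is not public, so the following is a hypothetical Python sketch of the general bug class the researchers describe. It contrasts an untrusted branch name interpolated into a shell command string with a name validated against a strict allowlist and passed as an argument list without a shell. The function names, the `git checkout` command, and the allowlist are all assumptions, and nothing here is executed.

```python
import re

# Strict allowlist: letters, digits, '.', '_', '/', '-'. Git's own ref-name
# rules still permit shell metacharacters such as ';', '$', '(', ')' and '`',
# so "valid to git" is not the same as "safe in a shell".
_SAFE_BRANCH = re.compile(r"[A-Za-z0-9._/-]+")


def is_safe_branch_name(name: str) -> bool:
    """Return True only for names that pass the allowlist and basic git ref rules."""
    if not _SAFE_BRANCH.fullmatch(name):
        return False
    # A leading '-' would be parsed as an option; a leading '/' is invalid in git.
    if name.startswith(("-", "/")):
        return False
    # Git rejects '..', '//', and names ending in '/', '.', or '.lock'.
    if ".." in name or "//" in name or name.endswith(("/", ".", ".lock")):
        return False
    # Git also rejects any path component that begins with '.'.
    return not any(part.startswith(".") for part in name.split("/"))


def build_command_unsafe(branch: str) -> str:
    # Vulnerable pattern: untrusted input spliced into a shell command string.
    return f"git checkout {branch}"


def build_command_safe(branch: str) -> list[str]:
    # Safer pattern: validate, then hand subprocess.run an argv list (no shell),
    # so shell metacharacters are never interpreted.
    if not is_safe_branch_name(branch):
        raise ValueError(f"rejected branch name: {branch!r}")
    return ["git", "checkout", branch]
```

The unsafe builder passes `main;id` through untouched. If that string were run with `subprocess.run(cmd, shell=True)`, the shell would treat `;` as a command separator and execute `id`. The safe builder rejects the name before any process starts. Even an accepted name reaches git as a single argv element rather than as shell text.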

Timeline of Remediation

OpenAI patched both vulnerabilities following responsible disclosure:

- Codex Flaw: Reported on December 16, 2025, and patched by OpenAI on February 5, 2026.

- ChatGPT Exfiltration Flaw: Addressed by OpenAI on February 20, 2026.

According to the reports, there is currently no evidence that either of these vulnerabilities was exploited by malicious actors before they were fixed.

The Broader Threat Landscape

These findings arrive alongside a rise in "prompt poaching" via malicious browser extensions. Threat actors are increasingly using add-ons to silently siphon AI chatbot conversations, leading to risks of identity theft and the exposure of intellectual property.

Eli Smadja, head of research at Check Point Research, emphasized that organizations cannot assume AI tools are secure by default. "As AI platforms evolve into full computing environments... native security controls are no longer sufficient on their own," Smadja stated.

Conclusion

The discovery of these vulnerabilities underscores a critical shift in AI security. As agents like Codex and ChatGPT gain deeper integration into developer workflows and enterprise environments, the containers they run in must be held to the same rigorous security standards as traditional application boundaries. For organizations, this highlights the need for independent visibility and layered protection against prompt injection and unexpected side-channel leaks.
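Independent visibility into DNS traffic is one such layer. The sketch below is a hypothetical heuristic, not a description of any vendor's product. It flags query names whose subdomain labels are unusually long or have high character entropy, both common signs of encoded payloads. The thresholds, the `registered_levels` shortcut, and the function names are assumptions chosen for illustration.

```python
import math
from collections import Counter

# Illustrative thresholds only; a real deployment would tune them against
# its own baseline traffic.
MAX_NORMAL_LABEL_LEN = 40
MIN_ENTROPY_LEN = 20
MIN_SUSPICIOUS_ENTROPY = 3.5  # bits per character


def shannon_entropy(text: str) -> float:
    """Shannon entropy of a string, in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in Counter(text).values())


def looks_like_dns_tunnel(qname: str, registered_levels: int = 2) -> bool:
    """Flag query names whose subdomain part is unusually long or random-looking.

    registered_levels assumes the last two labels (e.g. "attacker.example")
    form the registered domain; production code would consult the Public
    Suffix List instead.
    """
    labels = qname.rstrip(".").split(".")
    sub = labels[: max(len(labels) - registered_levels, 0)]
    if not sub:
        return False
    # Very long labels are rare in ordinary hostnames.
    if max(len(label) for label in sub) > MAX_NORMAL_LABEL_LEN:
        return True
    # Encoded data tends to use many distinct characters evenly.
    joined = "".join(sub)
    return len(joined) >= MIN_ENTROPY_LEN and shannon_entropy(joined) >= MIN_SUSPICIOUS_ENTROPY
```

Heuristics like this produce false positives (some CDNs and security services use long hashed subdomains legitimately) and can miss slow, low-volume leaks. They complement, rather than replace, egress controls that stop a sandbox from resolving arbitrary external names.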

Source: https://thehackernews.com/2026/03/openai-patches-chatgpt-data.html