Bridging the AI Agent Authority Gap: Continuous Observability as the Decision Engine


Bridging the AI Agent Authority Gap: Why Continuous Observability is the New Security Priority for Moroccan Tech Leaders

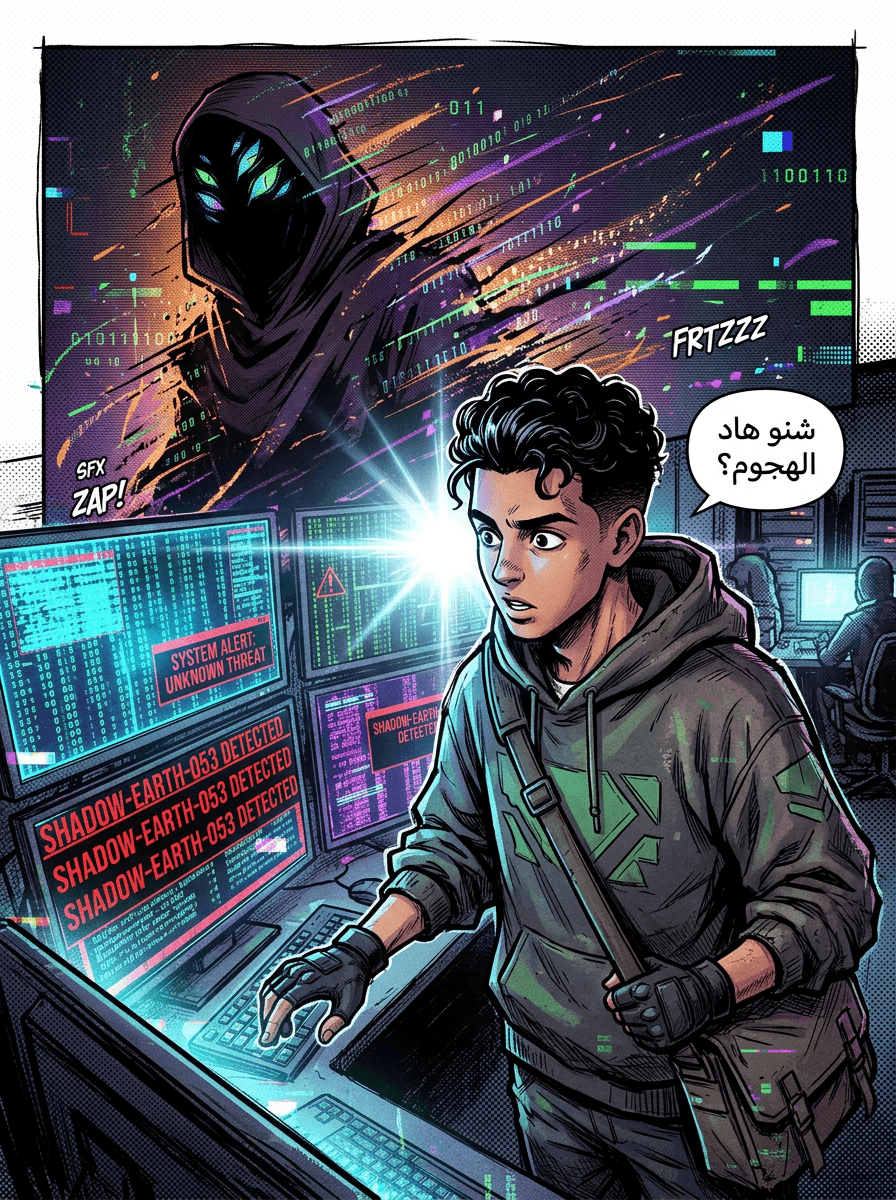

As Moroccan enterprises and startups increasingly turn to AI agents to automate workflows and optimize DevOps pipelines, a critical security challenge is emerging. While many local teams focus on the Large Language Models (LLMs) themselves, a more structural vulnerability lies elsewhere: the AI Agent Authority Gap.

This gap calls for a fundamental shift in how we approach Identity and Access Management (IAM). It is no longer enough to ask "Who has access?" We must now ask: "What authority is being delegated, under what conditions, and by whom?"

TL;DR: The Core Challenge

AI agents are not independent actors; they inherit authority from humans, bots, and service accounts. This creates a "delegation gap" in which agents can amplify security weaknesses already hidden in your infrastructure, including the unmanaged authority known as "identity dark matter." Closing this gap requires moving beyond static IAM to a model of continuous observability and dynamic delegation control.

Understanding the Delegation Gap

According to research from Orchid recently featured in The Hacker News (April 2026), AI agents represent a structural security gap because they act as delegated identities. Unlike a standard user who logs in with specific credentials, an AI agent is triggered or empowered by existing enterprise identities.

Because these agents are inseparable from the actors that invoke them, they differ fundamentally from both traditional software and human users. They occupy a middle ground in which their power depends entirely on the "delegator," whether that is a developer, a machine identity, or a service account.
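To make the idea of a delegated identity concrete, here is a minimal Python sketch. It is not Orchid's data model: `DelegationGrant`, `effective_scope`, and the permission strings are hypothetical names chosen for illustration. The record captures the three questions from the introduction (what authority, under what conditions, by whom). It assumes the agent's effective authority is the intersection of what the agent requests and what the delegator holds at that moment.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DelegationGrant:
    """Hypothetical record answering: what authority, under what conditions, and by whom."""
    delegator: str                   # human, bot, or service account that invokes the agent
    agent: str                       # the AI agent acting on the delegator's behalf
    requested_scope: frozenset[str]  # what authority the agent asks to use
    expires_at: datetime             # one example condition: the grant is time-bound

    def effective_scope(self, delegator_permissions: set[str], now: datetime) -> frozenset[str]:
        """The agent never holds more than the delegator holds right now, and nothing after expiry."""
        if now >= self.expires_at:
            return frozenset()
        return self.requested_scope & frozenset(delegator_permissions)


if __name__ == "__main__":
    grant = DelegationGrant(
        delegator="svc-ci-deployer",
        agent="devops-agent",
        requested_scope=frozenset({"repo:read", "deploy:prod", "db:admin"}),
        expires_at=datetime(2026, 5, 1, tzinfo=timezone.utc),
    )
    # The delegator currently lacks db:admin, so the agent cannot use it either.
    current = {"repo:read", "deploy:prod"}
    print(sorted(grant.effective_scope(current, datetime(2026, 4, 15, tzinfo=timezone.utc))))
    # ['deploy:prod', 'repo:read']
```

The intersection encodes the point that an agent's power depends on its delegator: if the delegator loses a permission, the agent loses it too, regardless of what the agent requested.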

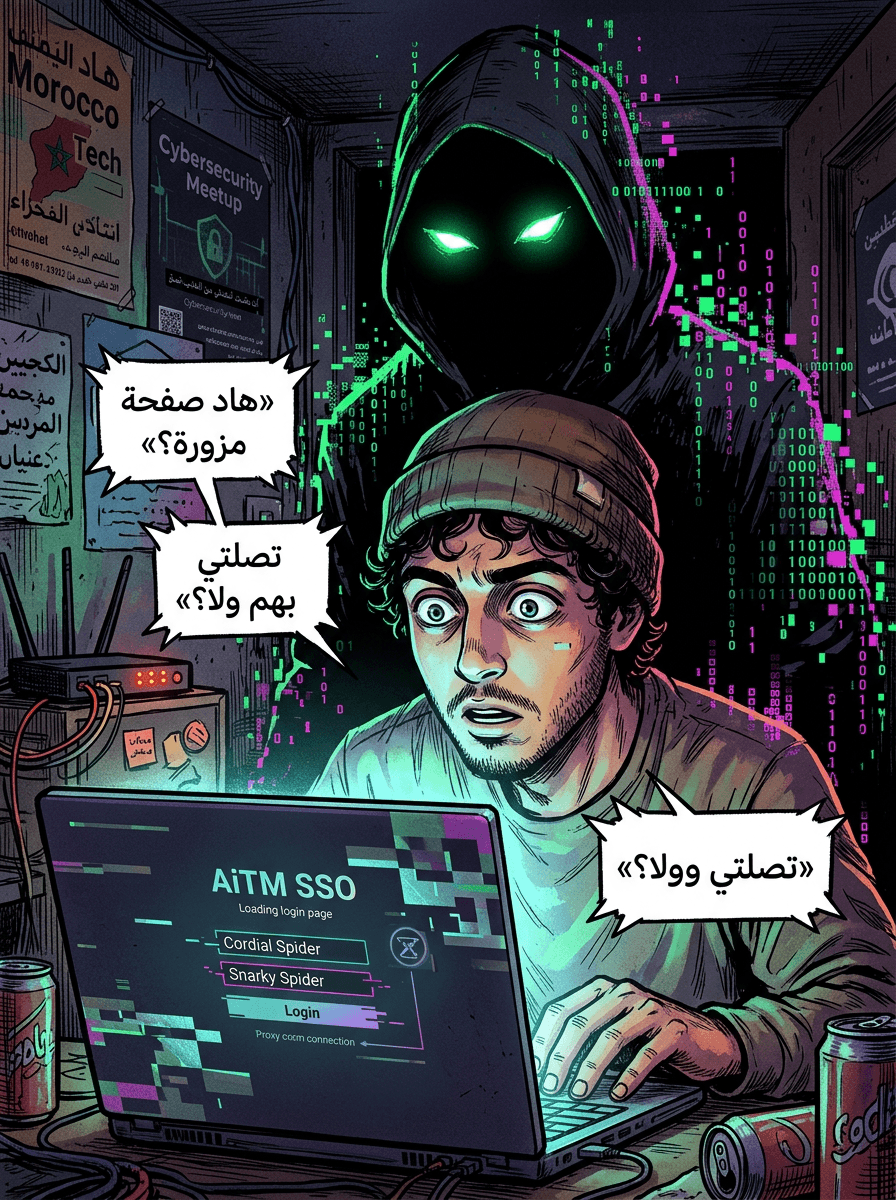

The Problem of 'Identity Dark Matter'

In Moroccan IT environments, where legacy systems often coexist with modern cloud APIs, enterprises frequently suffer from what Orchid calls "identity dark matter." This refers to unmanaged authority that exists outside the view of traditional IAM systems.

This dark matter includes:

- Fragmented identities across various APIs.

- Credentials embedded directly in code.

- Unmanaged service accounts that accumulate risk over time.
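Credentials embedded in code are often the easiest kind of dark matter to start surfacing. The following is a deliberately small, regex-based scanner in the spirit of dedicated secret-scanning tools, and it is no replacement for them. Its two rules are illustrative assumptions and will miss many real formats: the `AKIA` prefix used by long-term AWS access key IDs, and a generic `password = "..."` assignment. The names `SECRET_PATTERNS` and `find_embedded_credentials` are hypothetical.

```python
import re

# Illustrative rules only; real scanners ship many more rules plus entropy checks.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Matches names like password, DB_PASSWORD, pwd assigned a quoted literal.
    "hardcoded_password": re.compile(r"""(?i)(?:password|passwd|pwd)\w*\s*=\s*["'][^"']+["']"""),
}


def find_embedded_credentials(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a secret rule."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings


if __name__ == "__main__":
    sample = 'db_host = "10.0.0.5"\nDB_PASSWORD = "s3cret"\nkey = "AKIAABCDEFGHIJKLMNOP"\n'
    print(find_embedded_credentials(sample))
    # [(2, 'hardcoded_password'), (3, 'aws_access_key_id')]
```

Each finding points to an identity that exists in practice but may be invisible to the IAM system, which is exactly what an agent could later inherit.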

If an AI agent is introduced into an environment with significant identity dark matter, it doesn't just operate within those flaws; it amplifies them. The agent becomes an efficient tool for exploiting hidden permissions and execution paths that security teams may not even know exist.
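The amplification effect can be illustrated with a toy reachability computation. This sketch is an assumption-laden model, not how Orchid or any other product computes exposure. Identities and resources are nodes in a directed graph, and an edge means "can invoke, authenticate as, or access." An agent's effective reach is everything reachable from it, so one credential hardcoded in a script quietly extends what the agent can touch. All node names, and the `DOCUMENTED` set standing in for what IAM knows, are hypothetical.

```python
from collections import deque


def effective_reach(graph: dict[str, set[str]], start: str) -> set[str]:
    """Breadth-first search: every node reachable from `start` via delegation or credential edges."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, set()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(start)
    return seen


# Hypothetical environment: edges mean "can invoke / authenticate as / access".
GRAPH = {
    "devops-agent": {"svc-ci-deployer"},            # agent is triggered by a CI service account
    "svc-ci-deployer": {"k8s-prod", "legacy-billing-script"},
    "legacy-billing-script": {"billing-db-admin"},  # credential hardcoded in the script
}
DOCUMENTED = {"svc-ci-deployer", "k8s-prod"}        # what the IAM system believes the agent can reach

if __name__ == "__main__":
    reach = effective_reach(GRAPH, "devops-agent")
    print(sorted(reach - DOCUMENTED))  # ['billing-db-admin', 'legacy-billing-script']
```

The difference between computed reach and documented reach is the hidden execution path the agent can exploit at machine speed.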

Shifting to Continuous Observability

To bridge this gap, the security strategy must be sequenced correctly. You cannot govern an AI agent in isolation; you must first govern the identities that delegate authority to it.

Orchid’s Continuous Observability Platform proposes a model that first establishes a baseline of real identity behavior. Instead of relying on static policy assumptions, which are often incomplete or outdated, this approach shows how human and machine identities actually authenticate and execute workflows in real time. By reducing identity dark matter, organizations lower the risk that agents inherit "broken" authority models.
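The gap between static policy and observed behavior can be made concrete with a set comparison. This is a minimal sketch under two stated assumptions: you can export the permissions your IAM system grants each identity, and you can extract (identity, permission) pairs from authentication and access logs. The function names and event format are hypothetical. A real observability platform would also weigh time, frequency, and context, which this ignores.

```python
from collections import defaultdict


def build_baseline(events: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Collapse observed (identity, permission) events into a per-identity set of exercised permissions."""
    baseline: dict[str, set[str]] = defaultdict(set)
    for identity, permission in events:
        baseline[identity].add(permission)
    return dict(baseline)


def compare_to_policy(granted: dict[str, set[str]],
                      observed: dict[str, set[str]]) -> dict[str, dict[str, set[str]]]:
    """For each identity, report grants never used and activity the policy does not explain."""
    report = {}
    for identity in granted.keys() | observed.keys():
        g = granted.get(identity, set())
        o = observed.get(identity, set())
        report[identity] = {
            "unused_grants": g - o,    # candidates to remove before an agent can inherit them
            "unexplained_use": o - g,  # activity outside the IAM view: possible dark matter
        }
    return report


if __name__ == "__main__":
    granted = {"alice": {"repo:read", "deploy:prod"}, "svc-backup": {"s3:write"}}
    events = [("alice", "repo:read"), ("svc-backup", "s3:write"),
              ("svc-backup", "db:dump"), ("svc-legacy", "db:admin")]
    report = compare_to_policy(granted, build_baseline(events))
    print(report["alice"]["unused_grants"])         # {'deploy:prod'}
    print(report["svc-legacy"]["unexplained_use"])  # {'db:admin'}
```

Here `svc-legacy` never appears in the IAM export at all, yet it shows up in the logs: that is the kind of identity the baseline is meant to surface before any agent is wired to it.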

From Static Permissions to Dynamic Governance

Traditional IAM was designed around static permissions granted to directly authenticated users and services. For AI agents, we need Dynamic Sequential Delegation Control. This means the agent’s authority should be governed by:

- The Posture of the Delegator: If a human user has a "weak posture" (e.g., risky behavior or excessive hidden access), the AI agent they trigger should have restricted authority.

- Intent and Context: The system must evaluate the specific intent behind a requested action and the context of the target application.

- Real-Time Telemetry: Using live feeds to determine if an agent should be allowed to act, restricted to a recommendation-only role, or stopped entirely.

In this framework, an agent's nominal permissions are less important than the real-time state of the actor delegating power to it.
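The three governing factors can be combined into one decision function. The following Python sketch is one possible interpretation, not Orchid's algorithm or a published specification of Dynamic Sequential Delegation Control. It rests on three assumptions: telemetry yields a delegator risk score in [0, 1], each agent has a set of approved intents, and the target application is flagged as sensitive or not. `ActionRequest`, `decide`, and the thresholds 0.4 and 0.7 are hypothetical placeholders that a real deployment would need to tune.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    RECOMMEND_ONLY = "recommend_only"  # agent may propose the action; a human must execute it
    DENY = "deny"


@dataclass(frozen=True)
class ActionRequest:
    """Hypothetical shape of one agent action request, enriched with live telemetry."""
    delegator_risk: float   # 0.0 = healthy posture, 1.0 = likely compromised
    intent: str             # e.g. "read_logs", "restart_service"
    sensitive_target: bool  # context: does the target application hold critical data?


def decide(req: ActionRequest, approved_intents: set[str],
           recommend_threshold: float = 0.4, deny_threshold: float = 0.7) -> Decision:
    """Gate the agent on the delegator's current state first, then on intent and context."""
    if not 0.0 <= req.delegator_risk <= 1.0:
        raise ValueError("delegator_risk must be in [0, 1]")
    if req.delegator_risk >= deny_threshold:
        return Decision.DENY            # weak delegator posture: stop the agent entirely
    if req.intent not in approved_intents:
        return Decision.DENY            # intent outside what this agent is approved for
    if req.delegator_risk >= recommend_threshold or req.sensitive_target:
        return Decision.RECOMMEND_ONLY  # degrade to an advisory role
    return Decision.ALLOW


if __name__ == "__main__":
    approved = {"read_logs", "restart_service"}
    print(decide(ActionRequest(0.1, "read_logs", False), approved))  # Decision.ALLOW
    print(decide(ActionRequest(0.5, "read_logs", False), approved))  # Decision.RECOMMEND_ONLY
    print(decide(ActionRequest(0.9, "read_logs", False), approved))  # Decision.DENY
```

The function checks delegator risk before anything else, which mirrors the idea that the delegator's real-time state outweighs the agent's nominal permissions. The same request from the same agent can be allowed, downgraded, or blocked depending only on who is delegating and how healthy that identity currently looks.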

Practical Steps for Implementation

For Moroccan tech teams looking to adopt these technologies safely, the focus should be on the following mitigations:

- Illuminate all identities: Map out both human and machine identities across managed and unmanaged environments.

- Baseline behavior: Use continuous observability to understand actual workflow execution rather than just "on-paper" permissions.

- Map agent-to-workflow: Explicitly link agent identities to the specific applications they touch and the intent patterns they exhibit.

- Enforce based on delegator risk: Implement systems that can throttle agent authority if the delegating service account or human user shows signs of compromise.
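The agent-to-workflow mapping step can start as a plain registry plus a drift check. The following is a minimal sketch, assuming the team records each agent's approved (application, intent) pairs and can observe the pairs the agent actually exercises. `AgentMapping`, `find_drift`, and the example names are hypothetical. Mapping pairs rather than applications and intents separately keeps an approved intent from silently carrying over to an unapproved application.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentMapping:
    """Hypothetical registry entry linking an agent to the workflows it is expected to touch."""
    agent: str
    allowed_actions: frozenset[tuple[str, str]]  # (application, intent) pairs


def find_drift(mapping: AgentMapping, observed: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return observed (application, intent) actions that fall outside the agent's declared mapping."""
    return [action for action in observed if action not in mapping.allowed_actions]


if __name__ == "__main__":
    support_agent = AgentMapping(
        agent="support-agent",
        allowed_actions=frozenset({("crm", "read_customer"), ("ticketing", "update_ticket")}),
    )
    seen = [("crm", "read_customer"), ("ticketing", "update_ticket"), ("payments", "issue_refund")]
    print(find_drift(support_agent, seen))  # [('payments', 'issue_refund')]
```

Drift findings feed the enforcement step: a drifting agent whose delegator also shows signs of compromise is a natural candidate for reduced authority.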

Conclusion and Practical Uncertainties

The transition to AI agents is inevitable, but it requires a shift from "access control" to "authority governance." By focusing on the delegation chain, Moroccan enterprises can prevent AI from becoming a liability.

However, practitioners should remain aware of certain technical complexities. While the concept of "closing the gap" is clear, the methods for integrating observability platforms such as Orchid's with legacy IAM providers can vary. Additionally, the industry is still exploring whether real-time intent analysis can scale to "machine speed" in very large enterprise deployments.

Source: Bridging the AI Agent Authority Gap: Continuous Observability as the Decision Engine (The Hacker News, April 2026)