AI-Driven Pushpaganda Scam Exploits Google Discover to Spread Scareware and Ad Fraud

The "Pushpaganda" Threat: How AI-Driven SEO Fraud Hijacks Google Discover

TL;DR

Cybersecurity researchers have uncovered a massive ad fraud and scareware campaign dubbed "Pushpaganda." The operation uses AI-generated news and SEO poisoning to infiltrate Google Discover feeds, tricking users into enabling push notifications that lead to financial scams and fraudulent ad revenue.

A sophisticated new ad fraud scheme is currently exploiting the intersection of artificial intelligence and search engine optimization (SEO) to target mobile users. Known as "Pushpaganda," this campaign weaponizes Google’s personalized content feed, Google Discover, to distribute scareware and drive illicit financial gain.

The operation was identified and unmasked by the Satori Threat Intelligence and Research Team at HUMAN, who highlighted the campaign's ability to turn trusted mobile discovery surfaces into delivery vehicles for malicious content.

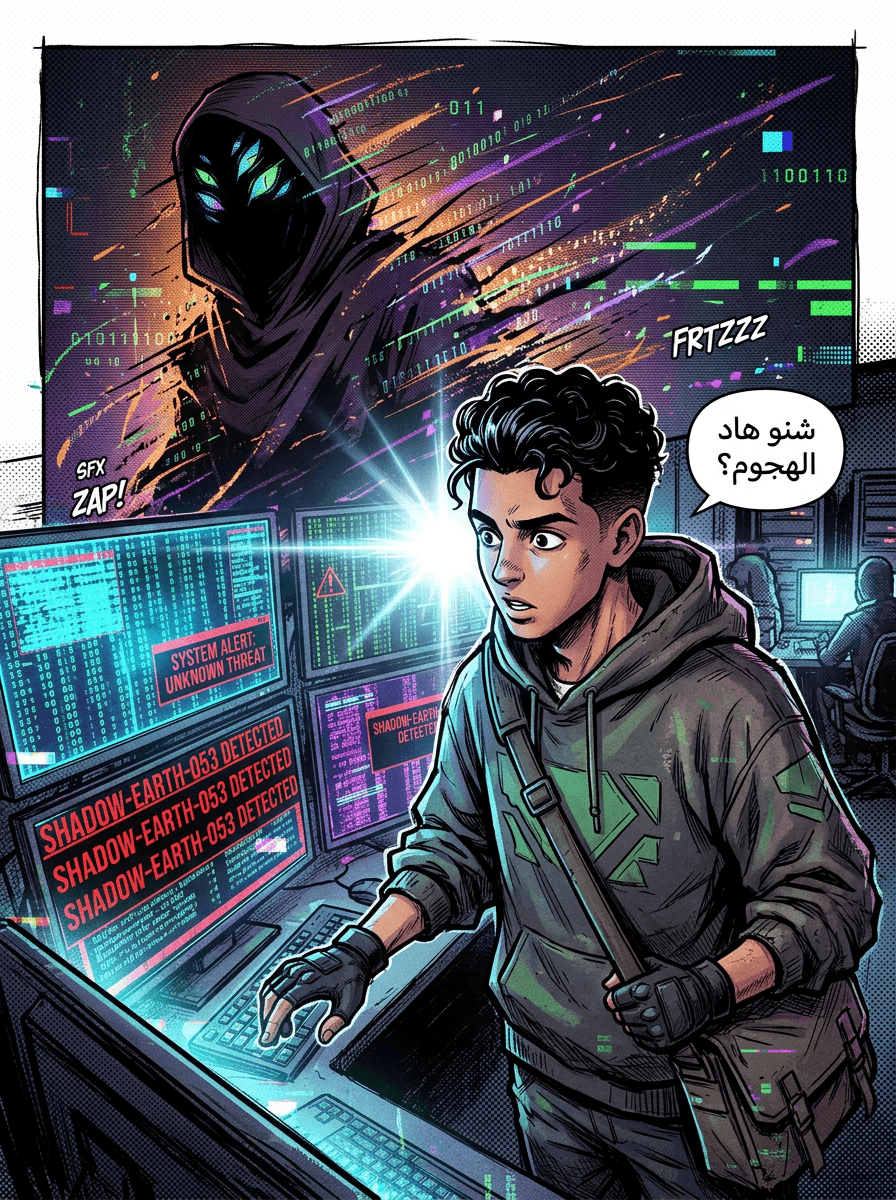

The Mechanics of "Pushpaganda"

The campaign’s name is a portmanteau of "push notifications" and "propaganda," reflecting its core strategy. The threat actors use a multi-stage attack chain to deceive users:

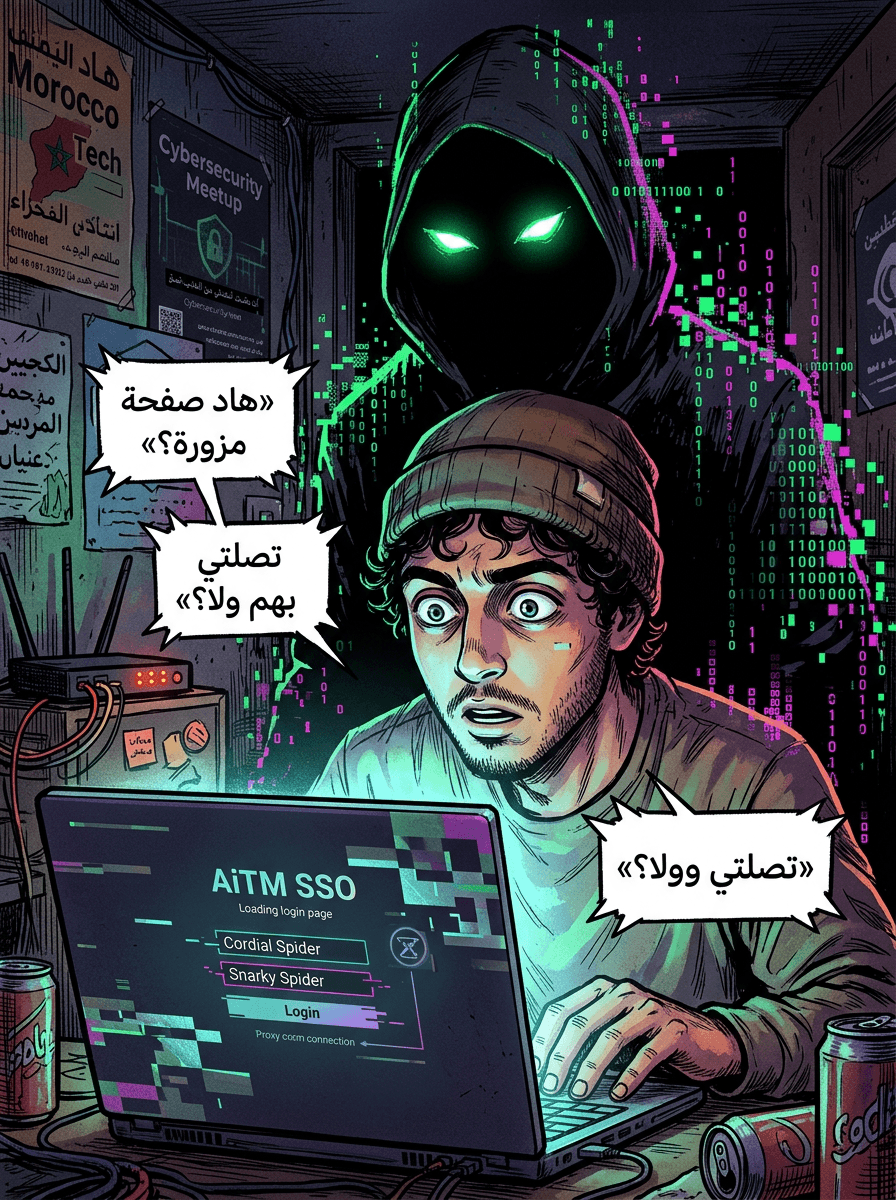

- AI Content Generation & SEO Poisoning: Attackers use generative AI to create high volumes of deceptive news stories. By employing SEO poisoning techniques, they successfully inject these "news" articles into Google Discover feeds on Android devices and Chrome browsers.

- The Hook: When a user clicks a story in their personalized feed, they are directed to a domain controlled by the attacker.

- Notification Hijacking: Once on the site, the user is coerced into enabling browser-based push notifications.

- Scareware Delivery: Once permissions are granted, the actors send persistent, alarming notifications—such as fake legal threats or urgent security warnings—to the user's device.

- Monetization: Clicking these notifications redirects the user to further actor-controlled sites (ghost sites) filled with ads. This generates "invalid organic traffic," allowing the scammers to collect ad revenue and direct users toward further financial scams or deepfake content.
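
The scareware stage above can be illustrated with a simple keyword heuristic. This is a minimal sketch, not HUMAN's detection method: the phrase list, the `alarm_score` and `looks_like_scareware` functions, and the threshold are all hypothetical choices made for this example.

```python
import re

# Hypothetical phrase list, chosen for illustration only; it is not taken
# from HUMAN's report.
ALARM_PATTERNS = [
    r"\blegal (action|notice)\b",
    r"\barrest warrant\b",
    r"\bvirus(es)? (detected|found)\b",
    r"\baccount (suspended|blocked)\b",
    r"\bact now\b",
    r"\bimmediately\b",
]


def alarm_score(text: str) -> int:
    """Count how many alarm patterns appear in a notification's text."""
    lowered = text.lower()
    return sum(1 for pattern in ALARM_PATTERNS if re.search(pattern, lowered))


def looks_like_scareware(text: str, threshold: int = 2) -> bool:
    """Flag text that matches at least `threshold` alarm patterns."""
    return alarm_score(text) >= threshold
```

A real filter would need far more than keyword matching, but the sketch shows why fake legal threats and urgent security warnings stand out: they stack several pressure phrases into one short message.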

Scale and Global Reach

The Pushpaganda operation has reached an immense scale. According to HUMAN, the campaign peaked at approximately 240 million bid requests associated with 113 domains over just a seven-day period.
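
To put those figures in perspective, the arithmetic below breaks the peak down into rough averages. It assumes the 240 million bid requests are a total for the seven-day window and are spread evenly across days and domains, which the report does not state; the variable names are illustrative.

```python
# Figures reported by HUMAN for the peak seven-day window.
PEAK_BID_REQUESTS = 240_000_000
DOMAINS = 113
DAYS = 7

# Assumption: an even spread across days and domains (not stated in the report).
per_day = PEAK_BID_REQUESTS / DAYS
per_domain = PEAK_BID_REQUESTS / DOMAINS
per_domain_per_day = PEAK_BID_REQUESTS / (DOMAINS * DAYS)

print(f"{per_day:,.0f} bid requests per day")
print(f"{per_domain:,.0f} bid requests per domain")
print(f"{per_domain_per_day:,.0f} bid requests per domain per day")
```

Under that even-spread assumption, the campaign averaged roughly 34 million bid requests per day, or about 300,000 per domain per day.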

While the campaign was initially observed targeting users in India, it has rapidly expanded its footprint. Researchers have now documented activity targeting users in:

- The United States

- The United Kingdom

- Canada

- Australia

- South Africa

The Role of AI in Scaled Content Abuse

This campaign underscores a growing trend in the threat landscape: the use of AI to bypass traditional quality filters. By using AI to produce content that appears legitimate to search algorithms, threat actors can mask "scaled content abuse."

Google’s spam policies specifically prohibit the use of AI to manipulate search rankings or create pages that offer no value to the user. In response to this specific campaign, a Google spokesperson stated that the company has launched a fix for the spam issue and continues to use automated systems to flag policy-violating content.

Connections to "Low5" and Ad Fraud Infrastructure

The discovery of Pushpaganda follows closely behind another major HUMAN investigation into a scheme called Low5. That operation involved more than 3,000 domains and 63 Android apps, generating up to 2 billion bid requests daily.

Researchers noted that these "cashout sites" or "ghost sites" often share a monetization layer. This means that even if a specific campaign like Pushpaganda is disrupted, the underlying infrastructure—the network of domains used to collect ad money—can be reused by other threat actors for different schemes.
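
One way to picture a shared monetization layer is to group sites by the ad account they cash out through. The sketch below is a toy illustration under stated assumptions: the domains, the account identifiers, and the `shared_monetization` function are hypothetical, and the report does not describe how HUMAN links sites in practice.

```python
from collections import defaultdict

# Hypothetical observations: (domain, monetization account ID).
OBSERVATIONS = [
    ("news-a.example", "acct-111"),
    ("news-b.example", "acct-111"),
    ("alerts-c.example", "acct-222"),
    ("ghost-d.example", "acct-111"),
]


def shared_monetization(observations):
    """Map each account ID to the sorted domains that use it,
    keeping only IDs seen on more than one domain."""
    by_account = defaultdict(set)
    for domain, account in observations:
        by_account[account].add(domain)
    return {
        account: sorted(domains)
        for account, domains in by_account.items()
        if len(domains) > 1
    }


clusters = shared_monetization(OBSERVATIONS)
print(clusters)
```

Here three of the four made-up domains collapse into a single cluster, which is the pattern that lets the same cashout infrastructure outlive any one campaign.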

Expert Analysis: Why Push Notifications?

The effectiveness of the scheme lies in psychological manipulation. Lindsay Kaye, Vice President of Threat Intelligence at HUMAN Security, noted that push notifications create a false sense of urgency.

"In many cases, users are quick to click, either to make them go away or to get more information, making them an effective tool in a malware author's arsenal," Kaye explained.

Conclusion

Pushpaganda represents an evolution in ad fraud, where AI is used not just to create malware, but to fabricate the "organic" interest needed to slip past the quality filters of surfaces like Google Discover. While Google has implemented fixes to mitigate this specific spam wave, the persistence of the underlying ad-fraud infrastructure suggests that mobile users must remain vigilant against unsolicited notification prompts and "alarming" news alerts.

Source: The Hacker News